High availability storage keeps shared data online when a server, disk, or VM host fails, reducing downtime for applications that depend on a common filesystem. Replicating the same data across multiple nodes means a single-node outage does not immediately become a data-loss event. GlusterFS provides a practical way to build redundant storage using ordinary Linux servers.

In a GlusterFS cluster, storage nodes join a trusted pool and contribute one or more bricks (directories backed by a filesystem). Bricks are combined into a volume, and a replicated volume writes the same data to multiple bricks so any surviving replica can satisfy reads. Clients mount the volume using the glusterfs mount helper, which fetches the volume configuration (the volfile) from a server in the pool and then talks directly to the bricks.

High availability depends on replica count, network stability, and consistent brick mounts across reboots. A dedicated brick filesystem (commonly XFS) avoids mixing OS I/O with storage I/O, and an unmounted brick after restart can leave a volume degraded. Replication improves resilience but is not a backup, and a two-node replica can suffer split-brain during partitions, so planning the volume type and node count is critical.

Steps to set up a high availability storage cluster with GlusterFS:

- Install GlusterFS on each storage node.

Keep the same GlusterFS major version on every node in the pool.
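As a rough sketch of this step, the helpers below install the server package, start the management daemon, and extract the major version for comparison across nodes. The package name glusterfs-server and the systemd unit glusterd are assumptions that hold on common Debian/Ubuntu and RHEL-family setups (the latter may need an extra repository enabled first); the function names are illustrative only.

```shell
# Sketch only: package name "glusterfs-server" and unit name "glusterd" are
# assumptions; adjust them for the distribution in use.
install_glusterfs() {
  if command -v apt-get >/dev/null 2>&1; then
    sudo apt-get update && sudo apt-get install -y glusterfs-server || return 1
  elif command -v dnf >/dev/null 2>&1; then
    sudo dnf install -y glusterfs-server || return 1
  else
    echo "no supported package manager found" >&2
    return 1
  fi
  # Start the management daemon now and on every boot.
  sudo systemctl enable --now glusterd
}

# Print the major version from the first line of `gluster --version` output,
# which is expected to look like "glusterfs 10.1".
gluster_major() {
  awk 'NR == 1 { split($2, v, "."); print v[1] }'
}

# Usage on each node (compare the printed numbers across nodes):
#   install_glusterfs
#   gluster --version | gluster_major
```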

- Identify the partition that will hold GlusterFS brick data on each node.

$ lsblk -o NAME,SIZE,FSTYPE,MOUNTPOINT
NAME   SIZE FSTYPE MOUNTPOINT
sda     80G ext4   /
sdb    200G
└─sdb1 200G

- Format the brick partition with XFS on each node.

$ sudo mkfs.xfs -f /dev/sdb1
meta-data=/dev/sdb1    isize=512    agcount=4, agsize=13107200 blks
##### snipped #####
realtime =none         extsz=4096   blocks=0, rtextents=0

Formatting destroys all data on the target partition.

- Create a mount point for the brick filesystem on each node.

$ sudo mkdir -p /gluster/brick1

- Mount the brick filesystem on each node.

$ sudo mount -t xfs /dev/sdb1 /gluster/brick1

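Use a dedicated brick mount to keep storage I/O isolated from the OS. It also avoids having to append force to gluster volume create, which GlusterFS otherwise requires for bricks placed on the root filesystem.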

- Add the brick filesystem to /etc/fstab on each node for automatic mounting.

$ sudo blkid /dev/sdb1
/dev/sdb1: UUID="0c3e4c7a-2a6e-4e4a-a5d4-8b6b4a9a9c2f" TYPE="xfs"

/etc/fstab:
UUID=0c3e4c7a-2a6e-4e4a-a5d4-8b6b4a9a9c2f /gluster/brick1 xfs defaults 0 0

A wrong entry in /etc/fstab can prevent boot; validate with sudo mount -a before reboot.

- Validate the /etc/fstab entry on each node.

$ sudo mount -a
$ findmnt /gluster/brick1
TARGET          SOURCE    FSTYPE OPTIONS
/gluster/brick1 /dev/sdb1 xfs    rw,relatime
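The fstab steps above can be sketched as a small idempotent helper, so that repeating the procedure does not create duplicate entries. The function names are hypothetical, and the file argument exists so the helper can be tried on a scratch copy before touching /etc/fstab.

```shell
# Print an fstab line for an XFS brick. Arguments: filesystem UUID, mount point.
brick_fstab_line() {
  printf 'UUID=%s %s xfs defaults 0 0\n' "$1" "$2"
}

# Append a line to an fstab-style file only if that exact line is not
# already present. Arguments: file path, line.
append_once() {
  grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# Usage (run as root; /dev/sdb1 and /gluster/brick1 come from the steps above):
#   uuid=$(blkid -s UUID -o value /dev/sdb1)
#   append_once /etc/fstab "$(brick_fstab_line "$uuid" /gluster/brick1)"
#   mount -a && findmnt /gluster/brick1
```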

- Create an empty brick directory for the volume on each node.

$ sudo mkdir -p /gluster/brick1/gv0

Brick directories must be empty when added to a volume.
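Before creating the volume, it can help to confirm on each node that the brick filesystem is really mounted and that the brick directory is empty. The following is a minimal sketch with hypothetical function names; it relies on the mountpoint utility from util-linux being installed.

```shell
# Succeeds only if the argument is an existing directory with no entries
# (hidden files count as entries).
dir_is_empty() {
  [ -d "$1" ] && [ -z "$(ls -A "$1")" ]
}

# Succeeds only if the brick filesystem is mounted and the brick directory
# is empty. Arguments: brick mount point, brick directory.
brick_ready() {
  mountpoint -q "$1" || { echo "$1 is not mounted" >&2; return 1; }
  dir_is_empty "$2" || { echo "$2 is missing or not empty" >&2; return 1; }
}

# Usage: brick_ready /gluster/brick1 /gluster/brick1/gv0
```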

- Probe each peer node from a single node to create the trusted pool.

$ sudo gluster peer probe gfs2
peer probe: success
$ sudo gluster peer probe gfs3
peer probe: success

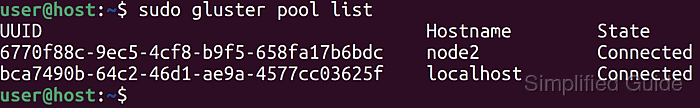

- Confirm every peer is connected in the pool.

$ sudo gluster pool list
UUID                                  Hostname  State
7d7b9d42-6a8f-4f4a-9d1e-8f4b3b2e3a10  gfs1      Connected
b3c5f9e1-8b1e-4a6a-8b5d-7d1d9c0e1f22  gfs2      Connected
0a1b2c3d-4e5f-6789-0abc-def123456789  gfs3      Connected
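For scripted checks, the pool listing can be parsed rather than read by eye. The sketch below assumes the output layout shown above (one header line, then one row per node with the state in the last column); the function name is illustrative.

```shell
# Reads `gluster pool list` output on stdin. Succeeds only if at least one
# node is listed and every node's last column is "Connected".
all_peers_connected() {
  awk 'NR > 1 && NF > 0 { n++; if ($NF != "Connected") bad = 1 }
       END { exit (n == 0 || bad) ? 1 : 0 }'
}

# Usage:
#   sudo gluster pool list | all_peers_connected && echo "pool healthy"
```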

- Create a replicated GlusterFS volume across the brick directories.

$ sudo gluster volume create gv0 replica 3 transport tcp \
    gfs1:/gluster/brick1/gv0 \
    gfs2:/gluster/brick1/gv0 \
    gfs3:/gluster/brick1/gv0
volume create: gv0: success: please start the volume to access data

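With replica 3, every file is stored on all three nodes, so the volume stays readable and writable after the loss of one storage node. If a second node fails, client quorum (typically enabled by default for replica 3 volumes) blocks writes to prevent split-brain.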
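The node-count reasoning can be checked with simple majority arithmetic. The sketch below assumes a strict-majority quorum rule; GlusterFS's auto client quorum additionally accepts exactly half of the bricks when the first brick is among them, which this sketch ignores. Function names are illustrative.

```shell
# Smallest number of bricks that forms a strict majority of a replica set.
majority_quorum() {
  echo $(( $1 / 2 + 1 ))
}

# Number of replicas that can be lost while a strict majority remains.
tolerated_failures() {
  echo $(( $1 - ($1 / 2 + 1) ))
}

# majority_quorum 3      prints 2
# tolerated_failures 3   prints 1
# tolerated_failures 2   prints 0
```

Under this rule a two-way replica tolerates no failures, which is why three nodes are the usual starting point for a replicated volume.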


- Start the new volume.

$ sudo gluster volume start gv0
volume start: gv0: success

- Verify all bricks are online for the volume.

$ sudo gluster volume status gv0
Status of volume: gv0
Gluster process                 TCP Port  Online  Pid
Brick gfs1:/gluster/brick1/gv0  49152     Y       1432
Brick gfs2:/gluster/brick1/gv0  49152     Y       1388
Brick gfs3:/gluster/brick1/gv0  49152     Y       1410
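The same check can be automated by parsing the status output. This is a sketch under the assumption that each brick occupies a single row starting with "Brick" and that the Online flag is the second-to-last column, as in the listing above; very long brick paths may wrap in real output and would need extra handling. The function name is hypothetical.

```shell
# Reads `gluster volume status <vol>` output on stdin. Succeeds only if at
# least one "Brick" row is present and each has "Y" in the Online column,
# taken here as the second-to-last field.
all_bricks_online() {
  awk '$1 == "Brick" { n++; if ($(NF - 1) != "Y") bad = 1 }
       END { exit (n == 0 || bad) ? 1 : 0 }'
}

# Usage:
#   sudo gluster volume status gv0 | all_bricks_online || echo "volume degraded"
```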

- Create a mount point for the volume on a client.

$ sudo mkdir -p /mnt/gv0

- Mount the GlusterFS volume on the client.

$ sudo mount -t glusterfs gfs1:/gv0 /mnt/gv0

The mount source can be any server in the trusted pool.
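The server named in the mount command is only contacted to fetch the volume configuration, so a mount attempt fails if that one server is down at mount time. Listing fallback servers with the backup-volfile-servers mount option avoids this. The helper below is a sketch that prints a client /etc/fstab line; the function name is hypothetical, and the _netdev option is an assumption that the client should wait for networking before mounting.

```shell
# Print an fstab line for a GlusterFS client mount.
# Arguments: primary server, colon-separated backup servers, volume, mount point.
client_fstab_line() {
  printf '%s:/%s %s glusterfs defaults,_netdev,backup-volfile-servers=%s 0 0\n' \
    "$1" "$3" "$4" "$2"
}

# Usage:
#   client_fstab_line gfs1 gfs2:gfs3 gv0 /mnt/gv0
# One-off mount with the same option:
#   sudo mount -t glusterfs -o backup-volfile-servers=gfs2:gfs3 gfs1:/gv0 /mnt/gv0
```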

- Confirm read/write access through the mount.

$ echo ok | sudo tee /mnt/gv0/healthcheck.txt
ok
$ cat /mnt/gv0/healthcheck.txt
ok

A practical HA sanity check mounts the same volume from a second client and confirms healthcheck.txt appears immediately.
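To exercise failover rather than just shared access, one option is to keep writing through the client mount while one storage node is powered off or disconnected (stopping only the glusterd service may leave brick processes running). The loop below is a minimal sketch with a hypothetical function name; it uses plain shell variables, so it is best run in a throwaway shell session.

```shell
# Write and read back small probe files in a directory, printing one line
# per attempt. Arguments: directory, number of attempts, seconds between
# attempts (default 1). Returns non-zero if any attempt failed.
write_probe() {
  dir=$1; count=$2; delay=${3:-1}; failed=0; i=1
  while [ "$i" -le "$count" ]; do
    f="$dir/probe-$i.txt"
    if { echo "probe $i" > "$f"; } 2>/dev/null &&
       [ "$(cat "$f" 2>/dev/null)" = "probe $i" ]; then
      echo "attempt $i: ok"
    else
      echo "attempt $i: FAILED"
      failed=1
    fi
    rm -f "$f" 2>/dev/null
    i=$((i + 1))
    if [ "$i" -le "$count" ]; then sleep "$delay"; fi
  done
  return "$failed"
}

# Usage (on the client, while one storage node is taken offline):
#   sudo sh -c '. ./probe.sh; write_probe /mnt/gv0 60 1'
```

Here probe.sh is a placeholder for whatever file the function is saved in. A brief pause in the output while the client detects the failed node would not be unusual; repeated FAILED lines suggest the volume lost quorum or the mount dropped.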

Mohd Shakir Zakaria is a cloud architect with deep roots in software development and open-source advocacy. Certified in AWS, Red Hat, VMware, ITIL, and Linux, he specializes in designing and managing robust cloud and on-premises infrastructures.