How to benchmark a web server with ApacheBench (ab)

Benchmarking a web server shows how quickly a single endpoint responds and how latency or errors change as concurrency rises. A short, controlled ApacheBench run helps confirm whether a code, config, or infrastructure change improved capacity, introduced a regression, or pushed the service beyond a safe request rate.

ApacheBench (ab) opens multiple client connections to one URL and repeats the same request until it reaches a target request count (-n) or a fixed time limit (-t). Its report highlights fields such as Requests per second, Time per request, transfer rate, and percentile timing so the same scenario can be compared across repeated runs.

Because ab benchmarks one URL at a time and only partially implements HTTP/1.x, it is best for quick endpoint checks rather than browser-like traffic patterns. Use a URL with an explicit path such as http://host.example.net/, start with low concurrency so the client host does not become the bottleneck, and run higher-load tests only where permission exists.

Related: How to authenticate with a bearer token in cURL

Related: How to send custom headers with wget

Related: How to test Apache configuration

Steps to benchmark a web server with ApacheBench (ab):

- Choose the exact endpoint to benchmark and include a path, even when the path is only a trailing slash.

ab rejects URLs that stop at the host name.

$ ab -n 1 -c 1 http://host.example.net
ab: invalid URL
Usage: ab [options] [http[s]://]hostname[:port]/path
##### snipped #####
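
A wrapper script can catch a missing path before ab is called. The following is a minimal sketch, not part of ab itself; the function name url_has_path is made up for illustration, and the check only looks for a slash after the host.

```shell
# Hypothetical pre-flight check: succeed only when the URL has a path after the host.
url_has_path() {
  # Strip an optional scheme such as http:// and then look for a slash.
  case "${1#*://}" in
    */*) return 0 ;;
    *)   return 1 ;;
  esac
}

for url in http://host.example.net http://host.example.net/; do
  if url_has_path "$url"; then
    echo "ok: $url"
  else
    echo "missing path: $url"
  fi
done
```

The loop reports the first URL as missing a path and accepts the second one.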

- Run a small baseline test first to confirm the endpoint is reachable and returns clean results.

$ ab -n 20 -c 2 http://host.example.net/
##### snipped #####
Complete requests: 20
Failed requests: 0

If this first run shows failures or non-2xx responses, fix correctness before increasing load.

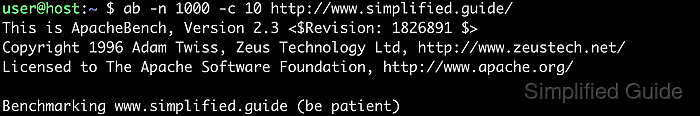
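
The baseline check can also be scripted so that a larger run only starts when the small one is clean. This sketch assumes the report lines look like the ones shown above; ab_report_is_clean is a hypothetical helper name, and the sample text stands in for a real run.

```shell
# Hypothetical helper: succeed only when an ab report read from stdin shows
# zero failed requests and no non-zero "Non-2xx responses" line.
ab_report_is_clean() {
  awk '
    /^Failed requests:/   { failed = $3; seen = 1 }
    /^Non-2xx responses:/ { non2xx = $3 }
    END { exit !(seen && failed == 0 && non2xx == 0) }
  '
}

# Sample text copied from the baseline run; a real run would be piped in instead:
#   ab -n 20 -c 2 http://host.example.net/ | ab_report_is_clean
sample='Complete requests: 20
Failed requests: 0'

if printf '%s\n' "$sample" | ab_report_is_clean; then
  echo "baseline clean"
else
  echo "baseline has failures"
fi
```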

- Run the first repeatable benchmark with an explicit request count and concurrency level.

$ ab -n 1000 -c 10 http://host.example.net/
This is ApacheBench, Version 2.3 <$Revision: 1913912 $>
##### snipped #####
Concurrency Level: 10
Time taken for tests: 0.674 seconds
Complete requests: 1000
Failed requests: 0
Requests per second: 1483.21 [#/sec] (mean)
Time per request: 6.742 [ms] (mean)
Time per request: 0.674 [ms] (mean, across all concurrent requests)
Transfer rate: 3121.43 [Kbytes/sec] received

Common pattern: ab -n N -c C [-k] http://host/.

- Read the report before changing the workload.

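Requests per second is throughput. The first Time per request line is the mean time a single request takes as seen by one client connection. The second Time per request line is the total test time divided by the number of completed requests, which equals the first value divided by the concurrency level.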
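
As a rough check on how the three figures relate, the sketch below recomputes them from the totals in the sample report (1000 requests, 0.674 seconds, concurrency 10). The function name ab_metrics is made up for illustration, and the results differ slightly from the report because ab works from the unrounded elapsed time.

```shell
# Recompute ab's headline metrics from the raw totals (illustrative sketch).
# Arguments: complete requests, time taken in seconds, concurrency level.
ab_metrics() {
  awk -v n="$1" -v t="$2" -v c="$3" 'BEGIN {
    printf "Requests per second: %.2f\n", n / t
    printf "Time per request (mean): %.3f ms\n", c * t * 1000 / n
    printf "Time per request (across all concurrent requests): %.3f ms\n", t * 1000 / n
  }'
}

ab_metrics 1000 0.674 10
```

The second latency figure is simply the inverse of the throughput, so it falls as concurrency rises even when individual requests get slower.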

- Increase -n and -c in deliberate steps after the baseline is clean.

$ ab -n 10000 -c 50 http://host.example.net/

Large concurrency values can overwhelm the target or saturate the client machine, so coordinate production testing and increase load gradually.
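
One way to keep the steps deliberate is to print the planned commands first and review them before running anything. The sketch below only echoes commands; ab_ramp_plan and its per-connection request budget are assumptions made for illustration.

```shell
# Print (not run) a stepped series of ab commands so each step can be reviewed.
# Arguments: URL, requests per connection, then one or more concurrency levels.
ab_ramp_plan() {
  url=$1
  per_client=$2
  shift 2
  for c in "$@"; do
    # Keep -n a multiple of -c so each connection gets the same share of requests.
    echo "ab -n $((c * per_client)) -c $c $url"
  done
}

ab_ramp_plan http://host.example.net/ 200 10 25 50
```

With these arguments the last planned step matches the 10000-request, 50-connection run shown above.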

- Add -k to enable HTTP KeepAlive when you want to measure connection reuse instead of a fresh TCP or TLS setup for each request.

$ ab -n 10000 -c 50 -k http://host.example.net/

-k is useful when the application normally serves traffic over persistent connections.

- Use a time-boxed run when each scenario should execute for the same duration rather than the same request count.

$ ab -t 30 -c 20 -k http://host.example.net/

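-t caps the benchmark duration and internally implies -n 50000, so a fast server can finish all 50,000 requests before the timer expires. To fill the whole time window in that case, pass a larger -n after -t on the command line.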
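
The ab manual states that -t implies -n 50000, so whether the timer or the request count ends a run depends on throughput. The sketch below estimates which limit is reached first; ab_t_stop_reason is a hypothetical helper, and the throughput values passed in are taken as rough expectations rather than measurements.

```shell
# Estimate whether a -t run stops on the request cap or on the timer.
# Arguments: expected requests per second, time limit in seconds,
# request cap (defaults to the 50000 implied by -t).
ab_t_stop_reason() {
  awk -v rps="$1" -v t="$2" -v cap="${3:-50000}" 'BEGIN {
    if (rps * t > cap) printf "request cap after about %.1f s\n", cap / rps
    else               printf "timer after %d s\n", t
  }'
}

ab_t_stop_reason 34414 30
ab_t_stop_reason 500 30
```

At roughly 34,000 requests per second the cap is reached in under two seconds, while at 500 requests per second the 30-second timer ends the run.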

- Accept variable response sizes on dynamic pages with -l.

$ ab -n 2000 -c 20 -l http://host.example.net/

Without -l, a changing response size usually appears as a length failure even when the application is behaving normally.
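
To judge whether -l is appropriate, it helps to see how many failures are length mismatches. The sketch below assumes the report contains ab's usual breakdown line in the form "(Connect: 0, Receive: 0, Length: 37, Exceptions: 0)"; the helper name and the sample numbers are illustrative only.

```shell
# Hypothetical helper: print how many failures in an ab report were length mismatches.
# Prints nothing when the report has no failure breakdown line.
ab_length_failures() {
  awk '/^ *\(Connect:/ {
    for (i = 1; i < NF; i++)
      if ($i == "Length:") { v = $(i + 1); sub(/,$/, "", v); print v }
  }'
}

# Synthetic sample text standing in for a saved report.
sample='Failed requests: 37
   (Connect: 0, Receive: 0, Length: 37, Exceptions: 0)'

printf '%s\n' "$sample" | ab_length_failures
```

When the length count equals the total number of failed requests, the failures are likely caused by varying response sizes rather than by connection or receive errors.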

- Add headers when the test depends on a virtual host, API token, or other request metadata.

$ ab -n 1000 -c 20 -H 'Host: host.example.net' -H 'Authorization: Bearer REDACTED' http://203.0.113.10/

Repeat -H for additional headers, and use -A user:pass when the endpoint requires HTTP Basic authentication.

- Write the request body to a file before benchmarking a POST or PUT endpoint.

$ cat > payload.json <<'EOF'
{"message":"hello"}
EOF

- Send the saved body with the correct content type header.

$ ab -n 500 -c 10 -p payload.json -T 'application/json' http://host.example.net/api/

Benchmarking write operations can create or modify data, so use an idempotent endpoint or a disposable test environment.

- Export percentile and per-request timing data when the results need plotting or later analysis.

$ ab -n 5000 -c 50 -k -e percentiles.csv -g times.tsv http://host.example.net/

-e writes percentile data as CSV, while -g writes tab-separated per-request timing data.
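
For a quick look at the exported percentiles without a plotting tool, a short awk filter is usually enough. This sketch assumes the CSV has one header row followed by "percentage,milliseconds" rows; ab_percentiles is a made-up name and the demo values are synthetic.

```shell
# Print the 50th, 95th, and 99th percentile rows from a CSV written by ab -e.
# Assumes a header row followed by "percentage,milliseconds" rows.
ab_percentiles() {
  awk -F, 'NR > 1 && ($1 == 50 || $1 == 95 || $1 == 99) {
    printf "p%d %s ms\n", $1, $2
  }' "$1"
}

# Synthetic demo data; a real file would come from: ab ... -e percentiles.csv
tmp=$(mktemp)
printf 'Percentage served,Time in ms\n50,1.201\n95,2.874\n99,4.130\n' > "$tmp"
ab_percentiles "$tmp"
rm -f "$tmp"
```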

- Save each benchmark run to a file so the same metrics can be compared later.

$ ab -n 5000 -c 50 -k http://host.example.net/ > ab-5000-50-k.txt
$ grep -E 'Requests per second|Time per request|Failed requests|Non-2xx responses' ab-5000-50-k.txt
Failed requests: 0
Requests per second: 34414.88 [#/sec] (mean)
Time per request: 1.453 [ms] (mean)
Time per request: 0.029 [ms] (mean, across all concurrent requests)
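
Once reports are saved, two of them can be compared with a few lines of shell. The sketch below is one possible approach; the helper names and the "after" throughput value are invented for the demonstration.

```shell
# Extract the mean throughput from a saved ab report.
ab_rps() {
  awk '/^Requests per second:/ { print $4 }' "$1"
}

# Percent change in throughput from a "before" report to an "after" report.
ab_rps_change() {
  awk -v a="$(ab_rps "$1")" -v b="$(ab_rps "$2")" \
    'BEGIN { printf "%+.1f%%\n", (b - a) / a * 100 }'
}

# Synthetic demo reports; real ones would come from redirected ab output.
dir=$(mktemp -d)
printf 'Requests per second: 1483.21 [#/sec] (mean)\n' > "$dir/before.txt"
printf 'Requests per second: 1631.53 [#/sec] (mean)\n' > "$dir/after.txt"
ab_rps_change "$dir/before.txt" "$dir/after.txt"
rm -rf "$dir"
```

A positive result means the second report shows higher throughput than the first.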

- Re-run the same scenarios after each server change, and use ab -h when you need additional flags such as -s, -q, or -r.

Keep the URL, headers, body, concurrency, and network path the same when comparing one run against another.

Tips for benchmarking a web server with ApacheBench (ab):

- Run ApacheBench from a separate machine when possible so the benchmark client does not consume the server's own CPU, memory, or network bandwidth.

- Prefer a staging environment or an approved maintenance window for aggressive concurrency tests.

- Warm up caches with a short run before collecting the numbers that will be compared.

- Keep -n as a multiple of -c when practical so work is distributed evenly across concurrent requests.

- Use -q to suppress progress output on large runs and -s with a timeout shorter than the 30-second default to fail faster when the server stalls.

- Use -r only when you intentionally want the run to continue after socket receive errors.

- Monitor CPU, memory, network throughput, and storage latency on both the client and the server to identify the real bottleneck.

- Repeat the same scenario several times and compare medians or percentile bands instead of trusting a single run.

- Test with and without -k because persistent connections can materially change latency and throughput.

- Use -l only when the endpoint legitimately returns different body lengths across otherwise successful responses.

- Remember that ab does not model full browser waterfalls or HTTP/2 or HTTP/3 user traffic, so use broader test tools when those behaviors matter.

- Keep a record of the exact URL, headers, payload, concurrency, and server-side changes for every run you plan to compare later.
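
The tip about comparing medians across repeated runs can be sketched as follows. This assumes each run was saved to its own report file as described in the steps; ab_median_rps is a hypothetical helper and the demo throughput values are synthetic.

```shell
# Median of the "Requests per second" values across several saved ab reports.
ab_median_rps() {
  awk '/^Requests per second:/ { print $4 }' "$@" |
    LC_ALL=C sort -n |
    awk '{ v[NR] = $1 }
         END {
           if (NR == 0) exit 1
           if (NR % 2) print v[(NR + 1) / 2]
           else        printf "%.2f\n", (v[NR / 2] + v[NR / 2 + 1]) / 2
         }'
}

# Synthetic demo: three saved reports from repeated runs of one scenario.
dir=$(mktemp -d)
i=0
for rps in 1502.77 1450.10 1483.21; do
  i=$((i + 1))
  printf 'Requests per second: %s [#/sec] (mean)\n' "$rps" > "$dir/run-$i.txt"
done
ab_median_rps "$dir"/run-*.txt
rm -rf "$dir"
```

With an even number of reports the function averages the two middle values.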