Large files are common causes of sudden disk pressure on servers, application hosts, and backup targets. Finding the biggest files quickly makes it easier to confirm whether logs, archives, database dumps, or virtual disk images are responsible before the filesystem fills completely.

In Linux, find can walk a directory tree, limit the results to regular files, and print each file's size alongside its path. Sorting that output numerically in descending order produces a ranked list of the biggest files under a target path, and numfmt can then convert the byte counts into human-readable units such as MiB or GiB without changing the order.

Scans of large directory trees can take time and often surface files that should not be removed directly, such as active database files, container layers, or virtual machine images. The -xdev option keeps a scan on one filesystem, sudo may be needed to read protected paths, and the %s value printed by find is the file's apparent size in bytes, which can differ from the disk blocks actually allocated to sparse files.

Steps to find the largest files with find in Linux:

- List the largest files under the target path and keep the scan on one filesystem.

$ find /srv/audit -xdev -type f -printf '%s %p\n' 2>/dev/null | sort -nr | head -n 6
440401920 /srv/audit/backups/db-backup.img
188743680 /srv/audit/images/archive.tar
100663296 /srv/audit/logs/app.log
12582912 /srv/audit/logs/app.log.1

%s prints the file's apparent size in bytes, and sort -nr keeps the largest result at the top. Replace /srv/audit with the mountpoint or application path that is filling up.
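To check the ranking logic without touching a real server, the same pipeline can be run against a throwaway tree. This is a minimal sketch assuming GNU find and coreutils; the scratch directory and file names are hypothetical, and truncate creates sparse files, so on filesystems that support them the demo uses almost no real disk space.

```shell
#!/usr/bin/env bash
# Sketch: build a scratch tree with files of known apparent size, then rank
# it with the same find | sort | head pipeline. Names are hypothetical.
set -eu

demo=$(mktemp -d)                  # throwaway directory for the demo
trap 'rm -rf "$demo"' EXIT         # remove it when the script exits
mkdir -p "$demo/logs" "$demo/backups"

# truncate sets the apparent size (M = 1024*1024) without writing data.
truncate -s 420M "$demo/backups/db-backup.img"
truncate -s 96M  "$demo/logs/app.log"
truncate -s 12M  "$demo/logs/app.log.1"

# Print "<bytes> <path>", sort numerically largest-first, keep the top 6.
ranking=$(find "$demo" -xdev -type f -printf '%s %p\n' 2>/dev/null | sort -nr | head -n 6)
printf '%s\n' "$ranking"

# The first field of the first line is the largest apparent size.
largest_bytes=$(printf '%s\n' "$ranking" | head -n 1 | cut -d' ' -f1)
```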

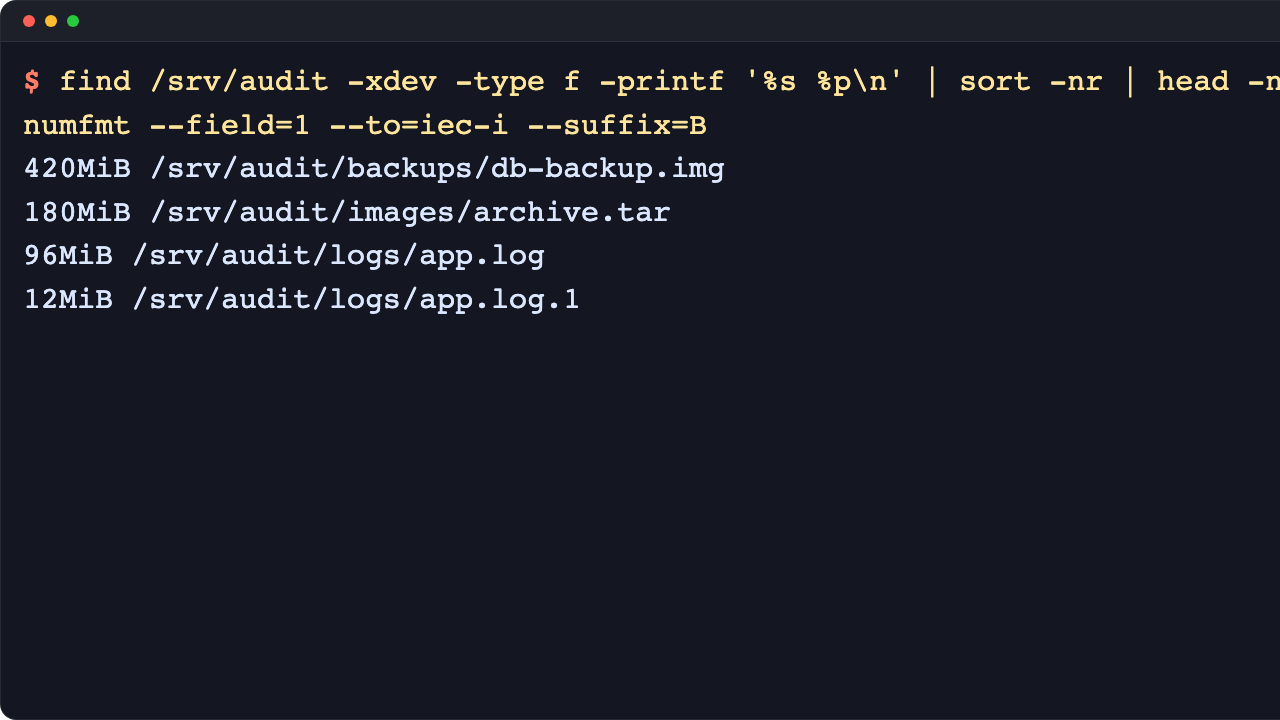

- Reformat the size column into human-readable units when raw byte counts are harder to scan.

$ find /srv/audit -xdev -type f -printf '%s %p\n' 2>/dev/null | sort -nr | head -n 6 | numfmt --field=1 --to=iec-i --suffix=B
420MiB /srv/audit/backups/db-backup.img
180MiB /srv/audit/images/archive.tar
96MiB /srv/audit/logs/app.log
12MiB /srv/audit/logs/app.log.1

numfmt is part of GNU coreutils. Omit the final pipe when exact byte counts are needed for reporting or comparison.
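Because numfmt runs after sort and head, it only rewrites the first column of lines that are already ranked. A minimal sketch, assuming GNU coreutils, feeds it the byte counts from the first step as literal input so the conversion can be checked offline.

```shell
#!/usr/bin/env bash
# Sketch: convert already-sorted "<bytes> <path>" lines with numfmt.
# Only field 1 is rewritten; the path column and line order are unchanged.
set -eu

raw='440401920 /srv/audit/backups/db-backup.img
188743680 /srv/audit/images/archive.tar
100663296 /srv/audit/logs/app.log
12582912 /srv/audit/logs/app.log.1'

# --to=iec-i uses powers of 1024 with an "i" infix (Ki, Mi, Gi);
# --suffix=B appends "B" so the result reads as MiB, GiB, and so on.
human=$(printf '%s\n' "$raw" | numfmt --field=1 --to=iec-i --suffix=B)
printf '%s\n' "$human"
```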

- Scan the root filesystem when the full partition is unknown but other mounted filesystems should be skipped.

$ sudo find / -xdev -type f -printf '%s %p\n' 2>/dev/null | sort -nr | head -n 10 | numfmt --field=1 --to=iec-i --suffix=B

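-xdev prevents the scan from crossing into other mounted filesystems, such as /home, /var, network storage, or removable media when those are separate mounts, and it also skips virtual filesystems such as /proc and /sys. When a different filesystem is the one running out of space, repeat the command with that mountpoint as the starting path.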
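When only a path on the full filesystem is known, df can report which mountpoint holds it, and that mountpoint becomes the starting point for the same -xdev scan. The sketch below assumes GNU df (for --output=target); mount_of and top_files_on_fs are hypothetical helper names, and the scan may need to run as root to read protected paths.

```shell
#!/usr/bin/env bash
# Sketch: resolve the mountpoint holding a path, then rank the largest files
# on that filesystem only. Helper names are hypothetical.
set -eu

# Print the mountpoint of the filesystem that contains $1.
# df --output=target prints a "Mounted on" header, then the mountpoint.
mount_of() {
  df --output=target -- "$1" | tail -n 1
}

# List the $2 (default 10) largest files on the filesystem holding $1.
top_files_on_fs() {
  local path=$1 count=${2:-10} mnt
  mnt=$(mount_of "$path")
  echo "Scanning filesystem mounted at $mnt" >&2
  find "$mnt" -xdev -type f -printf '%s %p\n' 2>/dev/null \
    | sort -nr | head -n "$count" \
    | numfmt --field=1 --to=iec-i --suffix=B
}
```

Calling top_files_on_fs /var/log 10 as root would, under these assumptions, list the ten largest files on whichever filesystem holds /var/log, without descending into other mounts.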

- Focus on newly grown files when the disk usage increase happened recently.

$ find /srv/audit -xdev -type f -mmin -60 -printf '%s %TY-%Tm-%Td %TH:%TM %p\n' 2>/dev/null | sort -nr
188743680 2026-04-14 03:58 /srv/audit/images/archive.tar
100663296 2026-04-14 04:16 /srv/audit/logs/app.log
12582912 2026-04-14 04:28 /srv/audit/logs/app.log.1

-mmin -60 limits the result to files modified in the last hour. Use -mtime -1 for the last 24 hours or adjust the minute window to match when the disk spike started.
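The time filter is easy to confirm on scratch files by backdating one of them. This is a minimal sketch assuming GNU touch (for -d '2 hours ago') and GNU find; the file names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: -mmin -60 keeps files modified in the last hour and drops older
# ones. One file is backdated with touch -d to fall outside the window.
set -eu

demo=$(mktemp -d)
trap 'rm -rf "$demo"' EXIT

truncate -s 180M "$demo/archive.tar"            # new file: mtime is now
truncate -s 420M "$demo/db-backup.img"
touch -d '2 hours ago' "$demo/db-backup.img"    # move mtime outside the window

# Only files modified less than 60 minutes ago are printed.
recent=$(find "$demo" -xdev -type f -mmin -60 -printf '%s %p\n' | sort -nr)
printf '%s\n' "$recent"
```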

- Inspect a candidate file before deciding how to clean it up.

$ ls -lh --time-style=long-iso /srv/audit/backups/db-backup.img
-rw-r--r-- 1 root root 420M 2026-04-14 01:28 /srv/audit/backups/db-backup.img

Large files from databases, containers, virtual machines, and active log pipelines often need application-specific cleanup rather than direct removal. When allocated disk space matters more than apparent size, check the same path with du -h, which reports the blocks actually in use, before taking action.
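The gap between apparent size and allocated space is easiest to see with a sparse file. The sketch below assumes GNU find, du, and truncate, plus a filesystem that supports sparse files (ext4, XFS, Btrfs, and tmpfs do); it prints find's %s (apparent size in bytes) next to %k (disk space used, in 1 KiB blocks). The file name is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: compare apparent size with allocated space for a sparse file.
set -eu

demo=$(mktemp -d)
trap 'rm -rf "$demo"' EXIT

truncate -s 420M "$demo/db-backup.img"   # apparent size only, no data blocks

# %s = apparent size in bytes, %k = disk space used in 1 KiB blocks.
find "$demo" -type f -printf '%s bytes apparent, %k KiB allocated: %p\n'

apparent=$(find "$demo/db-backup.img" -printf '%s')
# du reports allocated blocks by default; --apparent-size reports %s instead.
allocated_kib=$(du -k -- "$demo/db-backup.img" | cut -f1)
apparent_kib=$(du -k --apparent-size -- "$demo/db-backup.img" | cut -f1)
```

A large gap between the two du figures suggests the file is sparse, so deleting it would free far less space than its apparent size implies.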

Mohd Shakir Zakaria is a cloud architect with deep roots in software development and open-source advocacy. Certified in AWS, Red Hat, VMware, ITIL, and Linux, he specializes in designing and managing robust cloud and on-premises infrastructures.